The Data Science Lab has been actively engaged in the following research and development activities, covering both commissioned and self-initiated tasks.

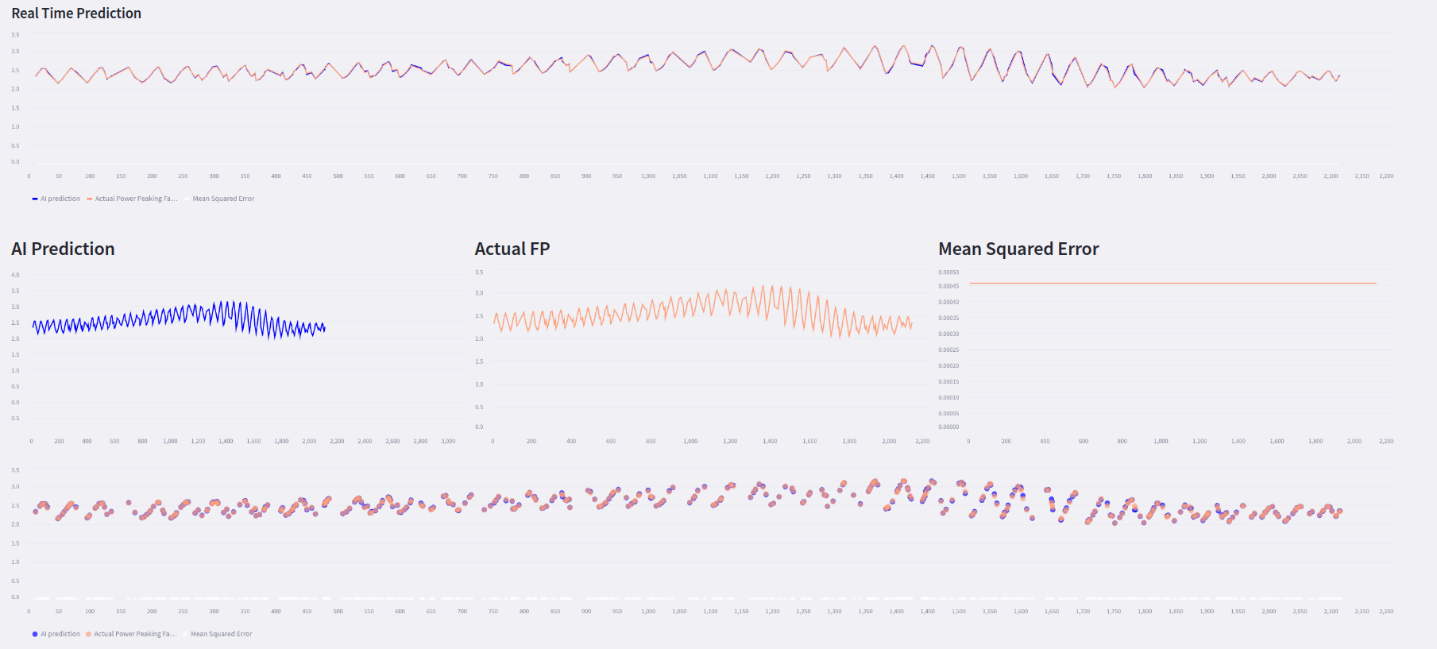

Reactor Core Surveillance of NPPs using Artificial Intelligence

The integration of machine learning techniques in reactor core monitoring enhances safety and efficiency in nuclear power plants. By analyzing vast amounts of sensor data, Artificial Intelligence algorithms can predict anomalies, optimize reactor performance, and facilitate proactive maintenance. This approach not only improves operational reliability but also reduces the risk of unforeseen failures. The application of AI in this domain marks a significant advancement in nuclear technology.

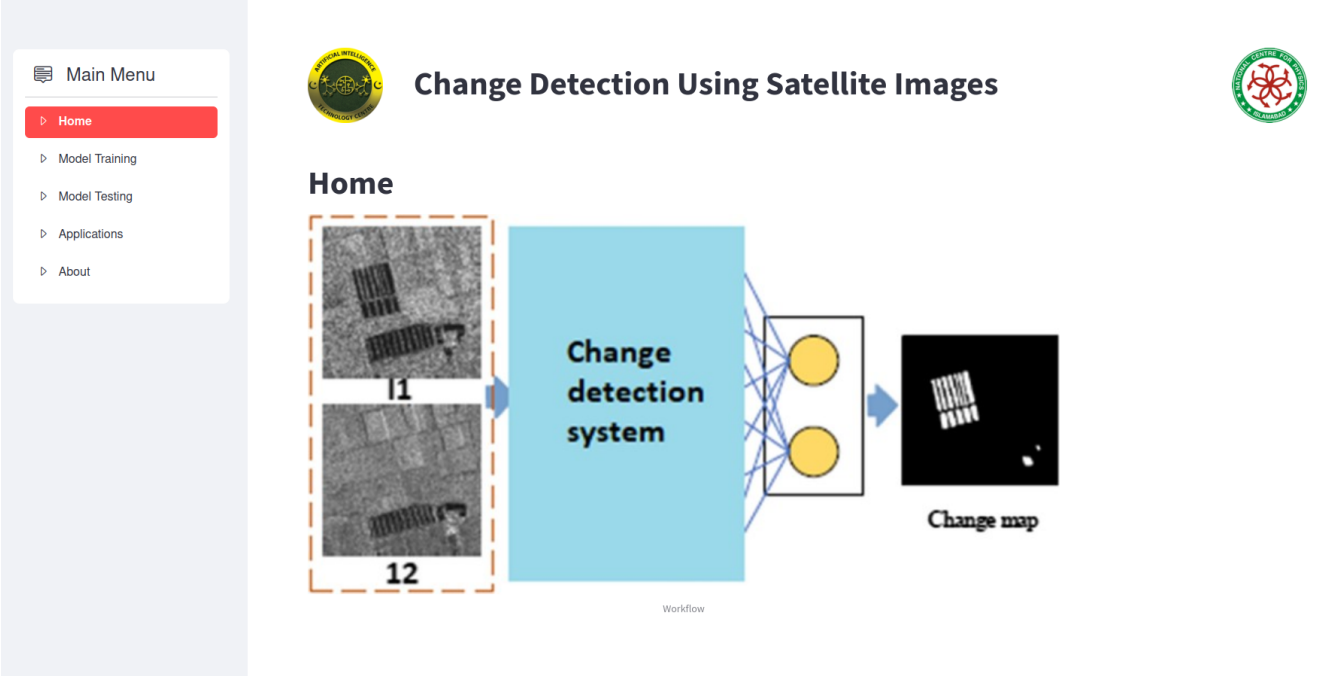

Model Development of Deep Learning-Based Change Detection from Satellite Imagery

Change detection in satellite imagery is a fundamental process for monitoring changes in the Earth's surface over time. By analyzing images acquired from satellites at different intervals, change detection algorithms identify and highlight areas where significant alterations have occurred, ranging from urban expansion and deforestation to natural disasters and environmental changes. Through preprocessing, image registration, and algorithm implementation, these techniques provide valuable insights for urban planning, land management, environmental monitoring, and disaster assessment, aiding decision-makers in understanding trends and implementing strategies for sustainable development and mitigation efforts.
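
As a rough illustration of the idea described above, the following minimal sketch compares two already co-registered single-band images pixel by pixel and flags large intensity differences. It is a classical image-differencing baseline, not the lab's deep learning model; the function names, the toy 3x3 images, and the threshold value are all assumptions made for this example.

```python
def change_mask(before, after, threshold):
    """Mark pixels whose absolute intensity change exceeds the threshold.

    Both images are lists of rows and are assumed to be co-registered,
    i.e. the same pixel position covers the same ground location.
    """
    if len(before) != len(after) or any(
        len(a) != len(b) for a, b in zip(before, after)
    ):
        raise ValueError("images must have the same shape")
    return [
        [1 if abs(b - a) > threshold else 0 for a, b in zip(row_before, row_after)]
        for row_before, row_after in zip(before, after)
    ]


def changed_fraction(mask):
    """Share of pixels flagged as changed."""
    total = sum(len(row) for row in mask)
    return sum(map(sum, mask)) / total if total else 0.0


# Toy single-band images acquired at two dates (hypothetical values).
before = [
    [10, 10, 10],
    [10, 10, 10],
    [10, 10, 10],
]
after = [
    [10, 12, 10],
    [10, 80, 90],
    [10, 10, 85],
]

mask = change_mask(before, after, threshold=30)
print(mask)
print(changed_fraction(mask))
```

A learned change detection model would replace the fixed threshold with a network that takes both images as input, but the output has the same form: a per-pixel map of changed and unchanged areas.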

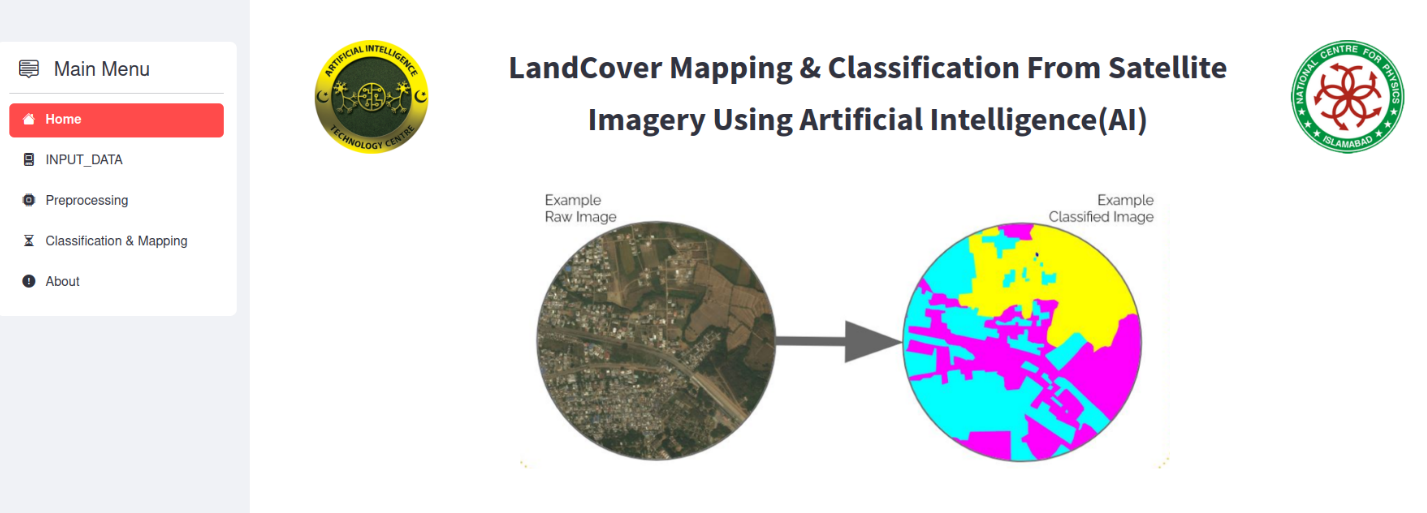

Model Development of Deep Learning Land Cover Classification from Satellite Imagery

Land cover data are an important input for ecological, hydrological, and agricultural models. However, these data have traditionally had large temporal gaps (~5 years) because they are computationally intensive to create, and more temporally granular land cover data are needed for studying a rapidly changing environment. To address this problem, Data Science Lab AITeC developed an application that derives land cover from Landsat imagery. It uses semantic segmentation, in which every pixel in an image is assigned the label of its corresponding class, to identify different classes of land use in satellite imagery.
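
The per-pixel labeling step can be sketched as follows. This is only an illustration of how a label map is obtained from per-class scores, assuming a segmentation model has already produced a score for each class at each pixel; the class names, the score values, and the helper functions are hypothetical and are not taken from the lab's application.

```python
from collections import Counter

# Hypothetical label set; a real land cover scheme would have more classes.
CLASSES = ["water", "vegetation", "built_up"]


def label_pixels(scores):
    """Assign each pixel the index of its highest-scoring class (argmax).

    scores[r][c] is a list with one score per class for pixel (r, c).
    """
    return [
        [max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
        for row in scores
    ]


def class_shares(label_map):
    """Fraction of the image covered by each class."""
    counts = Counter(label for row in label_map for label in row)
    total = sum(counts.values())
    return {name: counts.get(i, 0) / total for i, name in enumerate(CLASSES)}


# Toy 2x2 image with made-up model scores per pixel.
scores = [
    [[0.8, 0.1, 0.1], [0.2, 0.7, 0.1]],
    [[0.1, 0.6, 0.3], [0.1, 0.2, 0.7]],
]

label_map = label_pixels(scores)
shares = class_shares(label_map)
print(label_map)
print(shares)
```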

Model Development of Deep Learning Based Semantic Segmentation of Satellite Imagery Data

Semantic segmentation analyzes satellite images to classify land types, aiding applications like urban planning and environmental tracking. This task faces challenges like low resolution and complex object variations. We explored existing deep learning methods and developed a custom architecture optimized for object detection in satellite imagery.

Status: The proof of concept for phase 1 of the project has been completed, and a GUI has been developed.

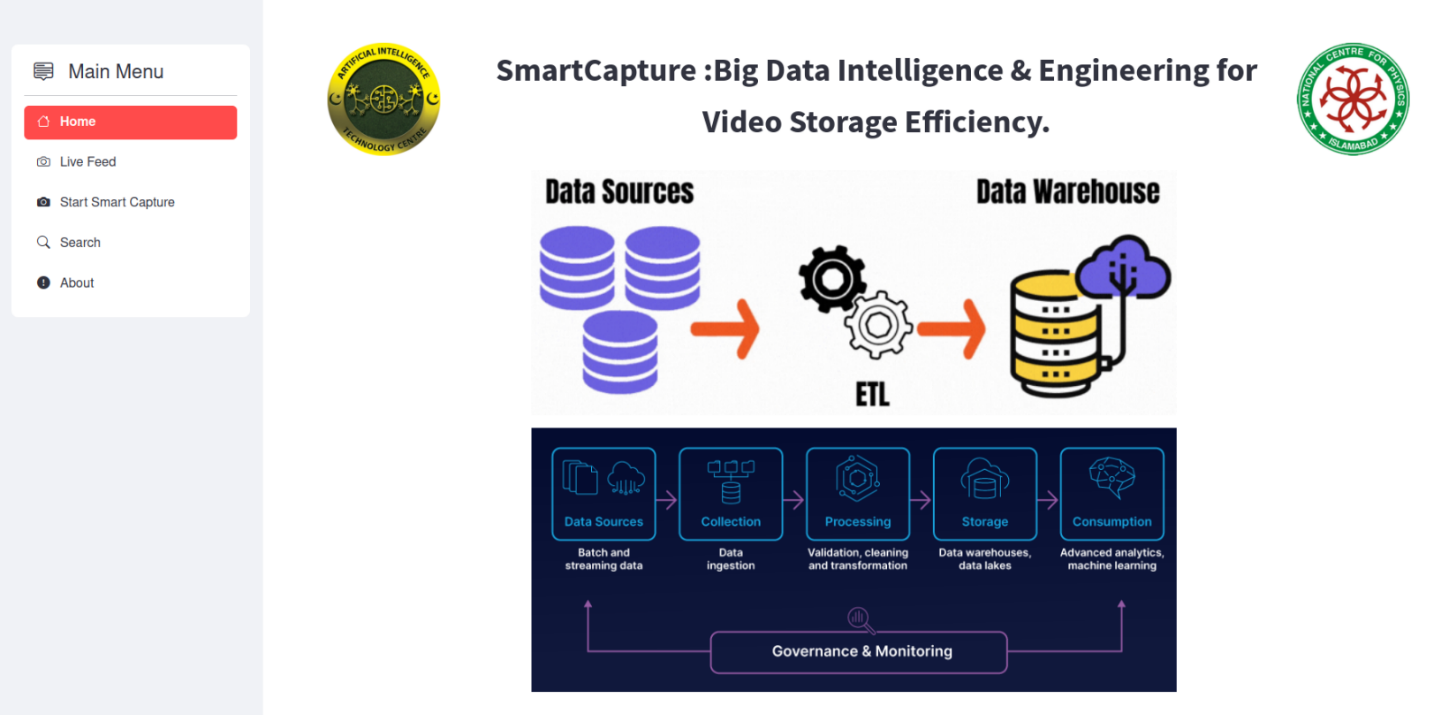

Smart Capture: Big Data Intelligence & Engineering for Video Storage Efficiency

Surveillance systems generate vast amounts of video data, placing a significant burden on storage capacity. Traditionally, surveillance footage is stored on hard disk drives, and because storage capacity is limited, older footage often has to be deleted periodically. To tackle this issue, we have introduced a method named Smart Capture: Big Data Intelligence & Engineering for Video Storage Efficiency.

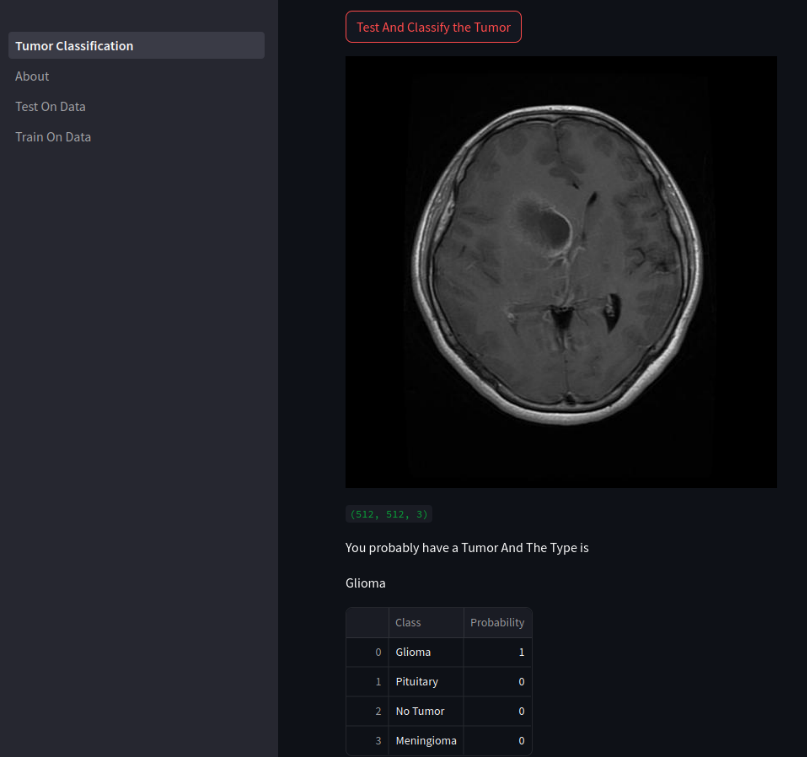

Brain Tumor Classification based on MRI Images

Deep learning is transforming brain tumor classification by acting as a powerful tool for analyzing MRI images. Convolutional neural networks, a type of deep learning algorithm, are particularly adept at this task. By sifting through vast amounts of labeled MRI scans, these algorithms can learn intricate patterns that distinguish healthy brain tissue from various tumor types. Deep learning models offer the promise of improved diagnostic accuracy, surpassing human ability in some cases. Additionally, these models can significantly reduce the time doctors spend analyzing complex scans, freeing up valuable time for patient care. Perhaps most significantly, deep learning's ability to detect subtle abnormalities may lead to earlier identification of tumors, allowing for swifter intervention and potentially improving patient outcomes.

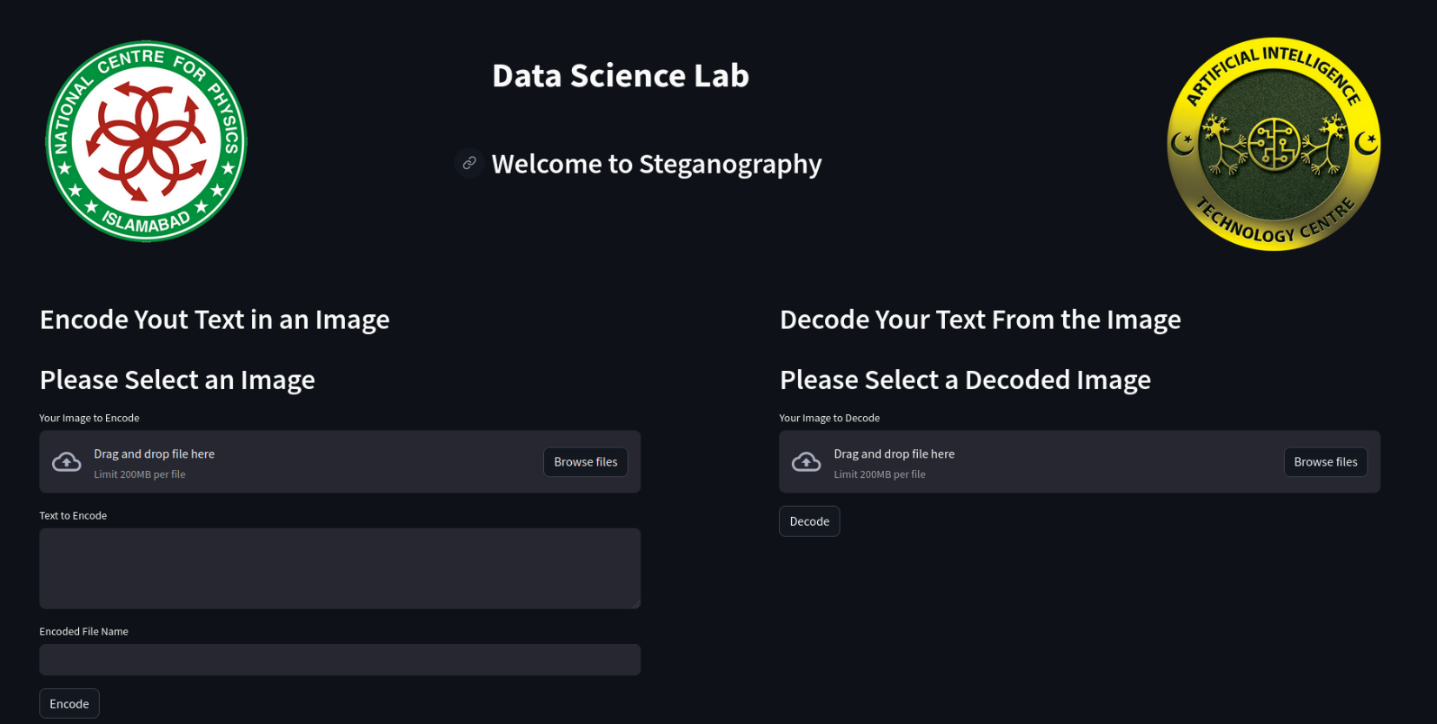

Image Steganography: Hiding Text Messages in Images

Steganography, the art of hiding data in plain sight, can be achieved through image steganography. Here, secret messages are tucked away within digital images by slightly modifying pixels in a way undetectable to the naked eye. This allows the image to appear normal while containing a hidden message, potentially useful for covert communication or secure data storage.
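
One common way to do this is least-significant-bit (LSB) embedding, sketched below. The source does not say which scheme the lab uses, so this is an assumption chosen for illustration. The sketch works on a flat sequence of 8-bit pixel values, stores a 4-byte length prefix followed by the UTF-8 message, and changes each used pixel value by at most 1; the function names and the stand-in cover data are hypothetical.

```python
def embed(pixels: bytes, message: str) -> bytes:
    """Hide a text message in the least significant bits of pixel values."""
    payload = message.encode("utf-8")
    data = len(payload).to_bytes(4, "big") + payload  # length prefix + message
    bits = [(byte >> shift) & 1 for byte in data for shift in range(7, -1, -1)]
    if len(bits) > len(pixels):
        raise ValueError("message too long for this cover image")
    stego = bytearray(pixels)
    for i, bit in enumerate(bits):
        stego[i] = (stego[i] & 0xFE) | bit  # overwrite only the lowest bit
    return bytes(stego)


def extract(pixels: bytes) -> str:
    """Recover a message previously written by embed()."""

    def read_bytes(start_bit: int, count: int) -> bytes:
        out = bytearray()
        for b in range(count):
            value = 0
            for k in range(8):
                value = (value << 1) | (pixels[start_bit + b * 8 + k] & 1)
            out.append(value)
        return bytes(out)

    length = int.from_bytes(read_bytes(0, 4), "big")
    return read_bytes(32, length).decode("utf-8")


# Stand-in for raw 8-bit pixel channel values of a cover image.
cover = bytes(range(256)) * 2
stego = embed(cover, "hello")
recovered = extract(stego)
max_change = max(abs(a - b) for a, b in zip(cover, stego))
print(recovered, max_change)
```

Because only the lowest bit of each value changes, the visual difference is negligible, but the hidden data does not survive lossy compression such as JPEG, so a lossless image format is needed for the stego image.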

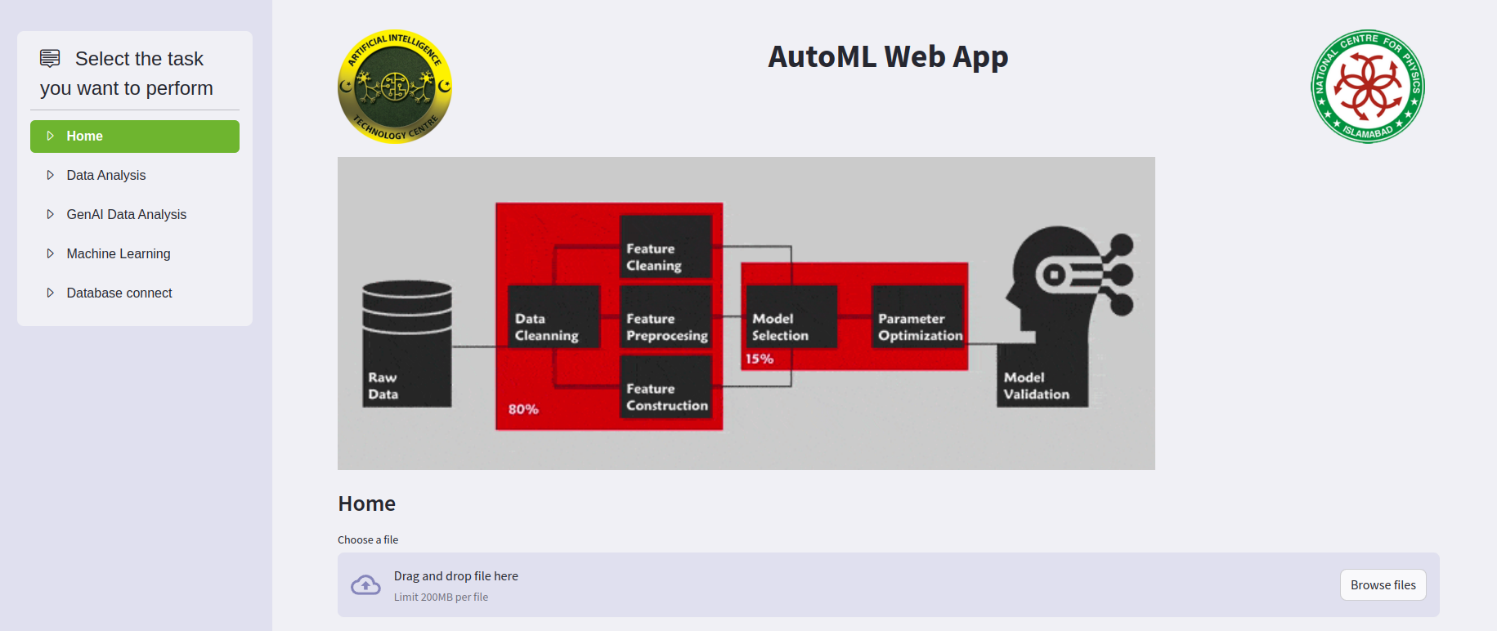

Development of Prototype of AutoML Tool

The AutoML Web App simplifies the development of AI models through an intuitive graphical interface. It streamlines the entire process, from data analysis (with or without AI assistance) to machine learning model creation. Users can easily upload their data and let the app handle the complex tasks, eliminating the need for extensive coding knowledge.

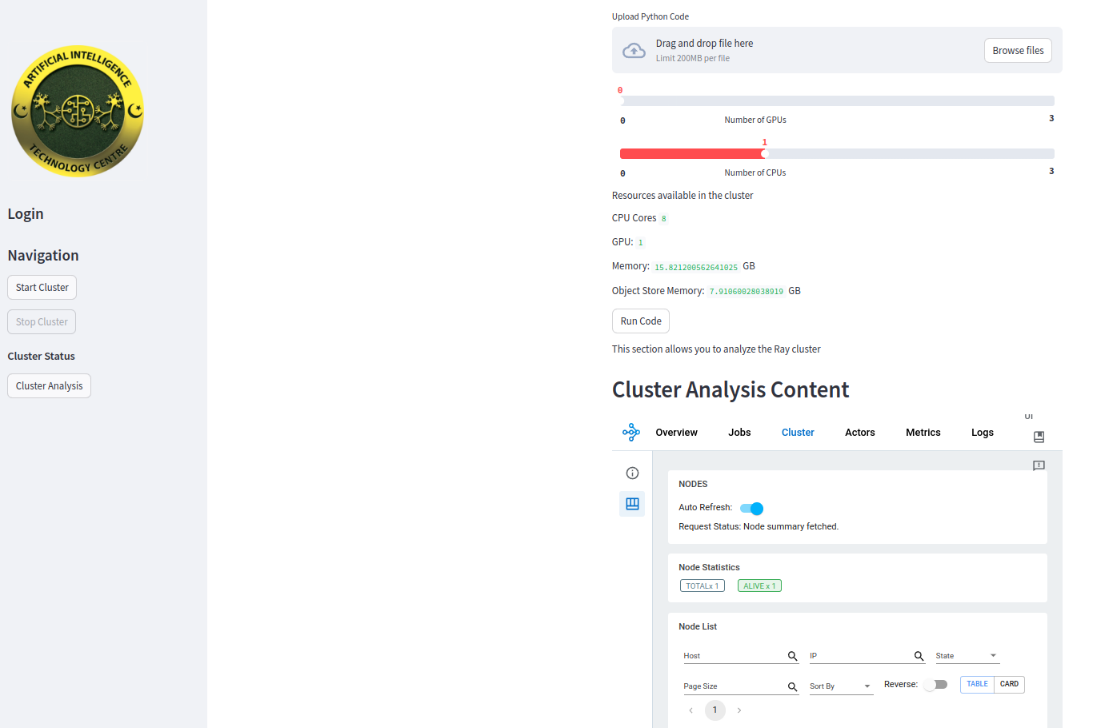

GPU-Based AITEC Cluster System

The GPU-based AITEC Cluster System of the Data Science Lab is a high-performance computing environment designed for data science and artificial intelligence workloads. It uses multiple GPU-enabled systems in a distributed architecture to accelerate complex computations by parallelizing training and inference tasks across multiple independent GPU systems.

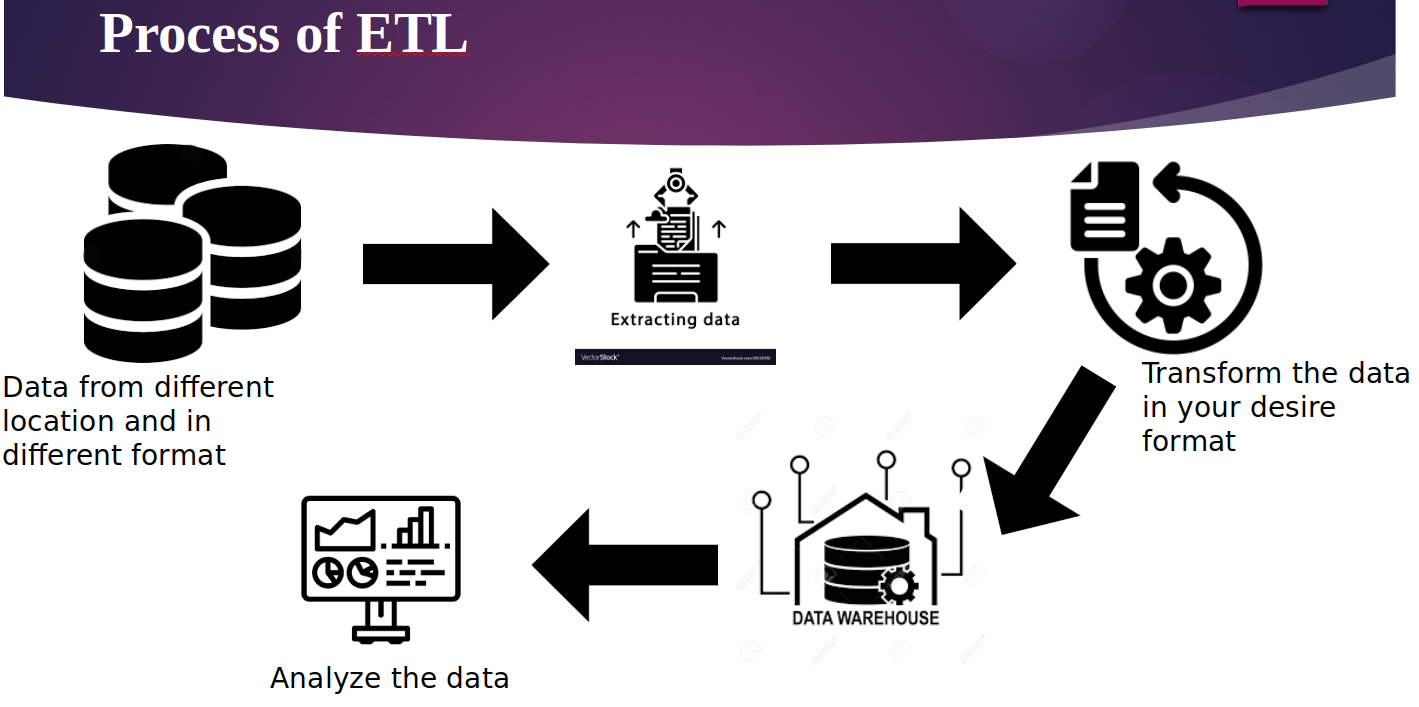

Development of ETL Pipeline

The development of an Extract, Transform, Load (ETL) pipeline is critical for managing and processing large volumes of data from various sources. This pipeline automates data extraction, transformation into a suitable format, and loading into a data warehouse. By ensuring data consistency, quality, and accessibility, an efficient ETL pipeline supports robust data analysis and decision-making processes. Its implementation is fundamental in achieving streamlined data workflows and comprehensive business intelligence.
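
The three stages can be sketched with the Python standard library alone. This is a minimal illustration, not the lab's pipeline: the CSV content, the column names, the Fahrenheit-to-Celsius rule, and the in-memory SQLite database standing in for a data warehouse are all assumptions made for the example.

```python
import csv
import io
import sqlite3

# Hypothetical raw source data with messy whitespace, a gap, and mixed units.
RAW_CSV = """sensor_id,reading,unit
a1, 21.5 ,C
a2,,C
a3,70.7,F
"""


def extract(text):
    """Extract: parse the raw CSV text into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop incomplete rows and normalize readings to Celsius."""
    clean = []
    for row in rows:
        raw = row["reading"].strip()
        if not raw:
            continue  # skip records with a missing reading
        value = float(raw)
        if row["unit"].strip().upper() == "F":
            value = (value - 32) * 5 / 9
        clean.append((row["sensor_id"].strip(), round(value, 2)))
    return clean


def load(records, conn):
    """Load: write the cleaned records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS readings (sensor_id TEXT, celsius REAL)"
    )
    conn.executemany("INSERT INTO readings VALUES (?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for a real data warehouse
load(transform(extract(RAW_CSV)), conn)
rows = conn.execute(
    "SELECT sensor_id, celsius FROM readings ORDER BY sensor_id"
).fetchall()
print(rows)
```

Keeping the stages as separate functions makes each one testable on its own and lets the extract or load step be swapped for another source or target without touching the transformation logic.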

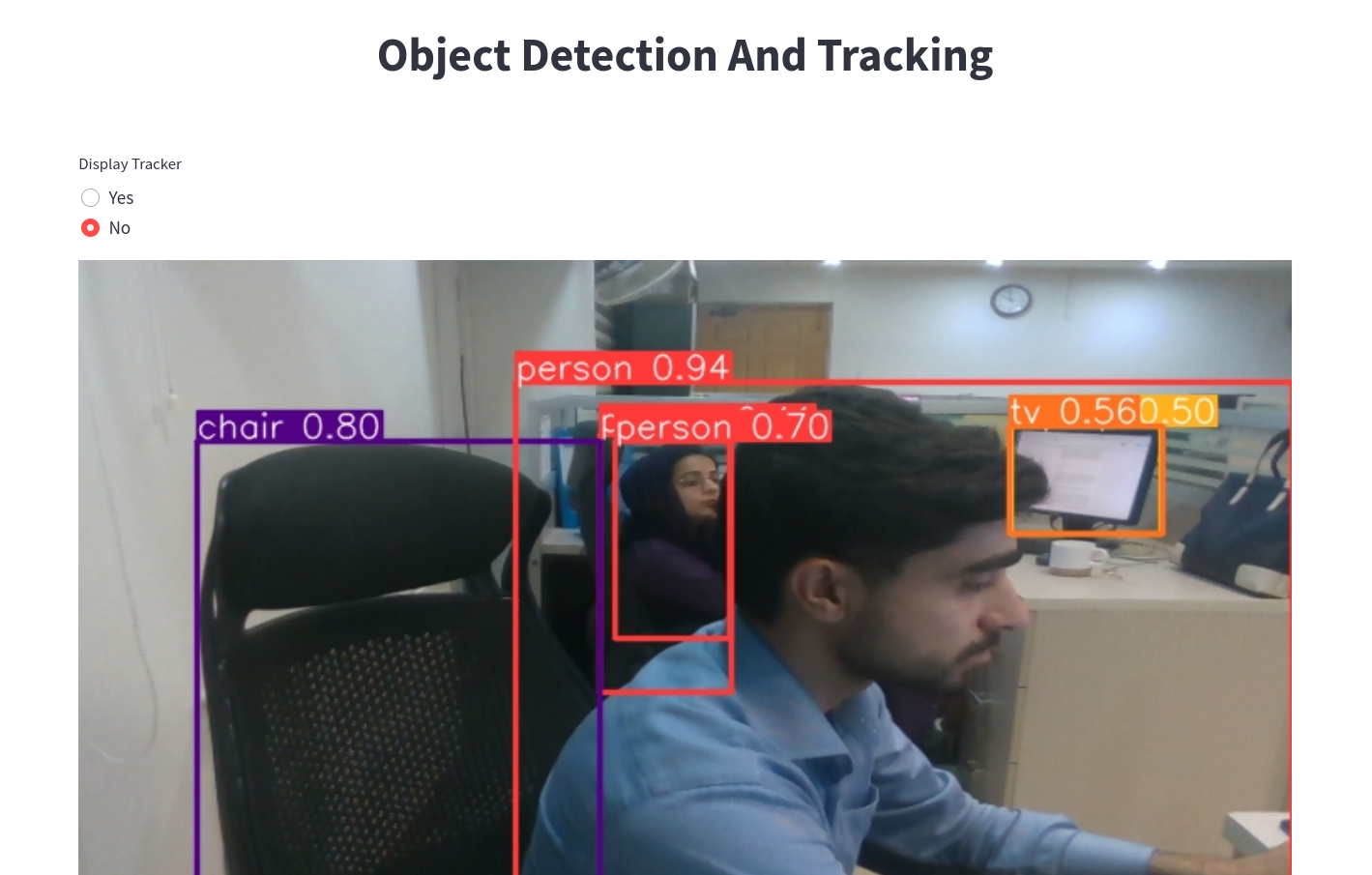

Development of Real Time Object Detection using Aerial Imagery

Real-time object detection on aerial imagery involves leveraging advanced computer vision techniques to identify and classify objects from high-altitude images. This technology is pivotal in applications such as surveillance, disaster management, and environmental monitoring. By processing aerial images in real-time, the system provides immediate insights and actionable data, enhancing situational awareness and operational response. The development of such systems represents a leap forward in remote sensing capabilities.

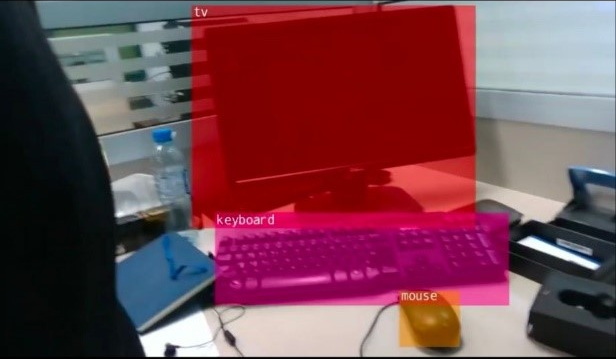

Development of Real Time Semantic Segmentation of Objects from Live Camera Feed

The real-time semantic segmentation of objects from live camera feeds employs deep learning to accurately classify and delineate objects within a video stream. This technology is essential in fields such as autonomous driving, robotics, and augmented reality. By enabling precise object identification and context-aware analysis, it enhances the interaction between machines and their environments. The development of this system ensures that live video data can be effectively utilized for real-time decision-making.

Development of Data Mining Tool on Time Series Data

Creating a data mining tool for time series data involves designing algorithms that can uncover patterns, trends, and anomalies in sequential datasets. This tool is instrumental in various domains, including finance, healthcare, and manufacturing, where understanding temporal relationships is crucial. By providing insights into historical data and forecasting future events, the tool aids in predictive analytics and strategic planning. Its development underscores the importance of temporal data analysis in modern data science.
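
As one simple example of anomaly detection in sequential data, the sketch below flags points that deviate strongly from the mean of the preceding window (a rolling z-score). The source does not state which algorithms the tool uses, so the method, the window size, the threshold, and the sample series are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def rolling_anomalies(series, window=5, z_threshold=3.0):
    """Return (index, value) pairs that deviate strongly from recent history.

    Each point is compared against the mean and sample standard deviation
    of the `window` values immediately before it.
    """
    anomalies = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(series[i] - mu) / sigma > z_threshold:
            anomalies.append((i, series[i]))
    return anomalies


# Hypothetical sensor readings with one obvious spike.
readings = [10.0, 10.2, 9.9, 10.1, 10.0, 10.1, 25.0, 10.0, 9.8, 10.2]
found = rolling_anomalies(readings)
print(found)
```

Note that a spike inflates the standard deviation of the windows that follow it, so points shortly after an anomaly are judged against a looser baseline; more robust statistics such as the median can reduce this effect.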

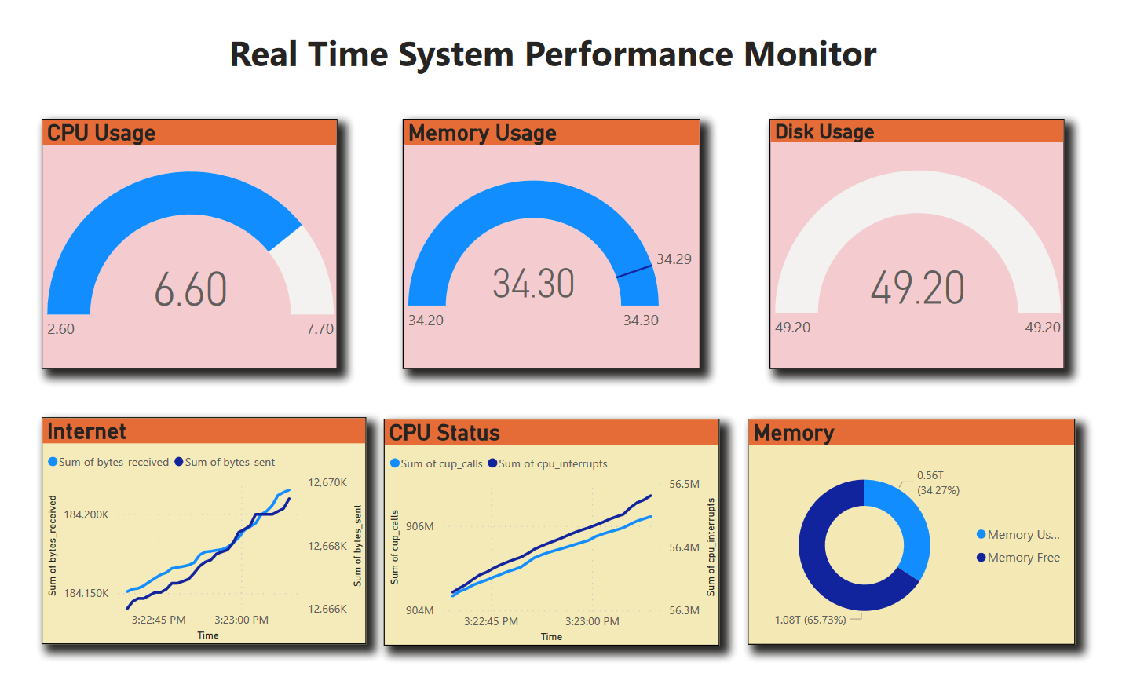

Using an ETL Tool to Monitor Real Time System Performance and Visualize It on a Dashboard

Utilizing an ETL tool to monitor real-time system performance involves continuous data extraction, transformation, and loading into a centralized system for real-time analysis. This setup allows for the visualization of system metrics on an interactive dashboard, facilitating instant performance monitoring and decision-making. Such a tool helps in identifying bottlenecks, optimizing resource utilization, and ensuring system reliability. The combination of ETL processes and dashboard visualization enhances operational transparency and efficiency.

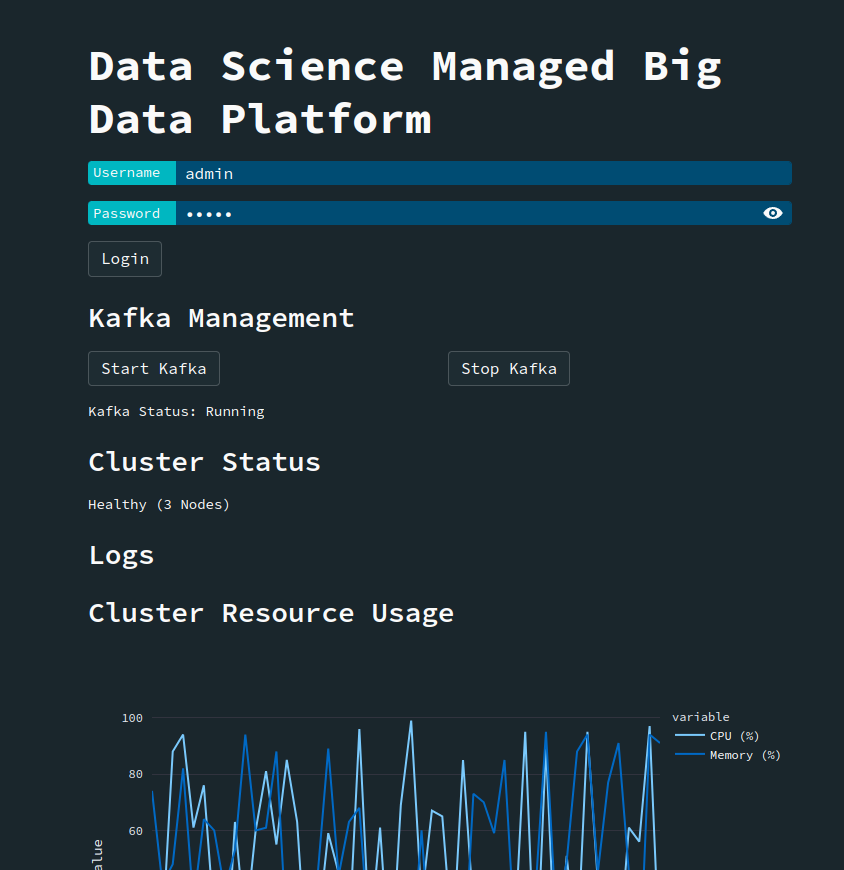

Development of Big Data Analytics Ecosystem

The development of a big data analytics ecosystem encompasses the integration of various tools and technologies to handle, process, and analyze large-scale data. This ecosystem includes data storage solutions, processing frameworks, and analytical tools designed to extract meaningful insights from vast datasets. By enabling comprehensive data analysis, it supports complex decision-making and drives innovation across industries. The creation of such an ecosystem is pivotal in harnessing the full potential of big data.

Development of Standalone Object Detection on Edge System

Developing a standalone object detection system on edge devices involves implementing efficient machine learning models that can run locally without reliance on cloud resources. This approach is crucial for applications requiring low latency and real-time processing, such as surveillance, smart home devices, and industrial automation. By enabling on-device computation, it reduces dependency on network connectivity and enhances data privacy. The advancement of edge-based object detection represents a significant step towards autonomous and decentralized intelligent systems.